The Many Layers of Image Description - Part 1

Inspired by

’s Cameras as Notepads, I thought it was high time for me to *finally* start my own series of articles and I thought I would give Substack a whirl. I am an artist and technologist who researches image description and I work at the intersection of embedded metadata, knowledge graphs and human oriented, data-centric AI.

1. Why this, why now

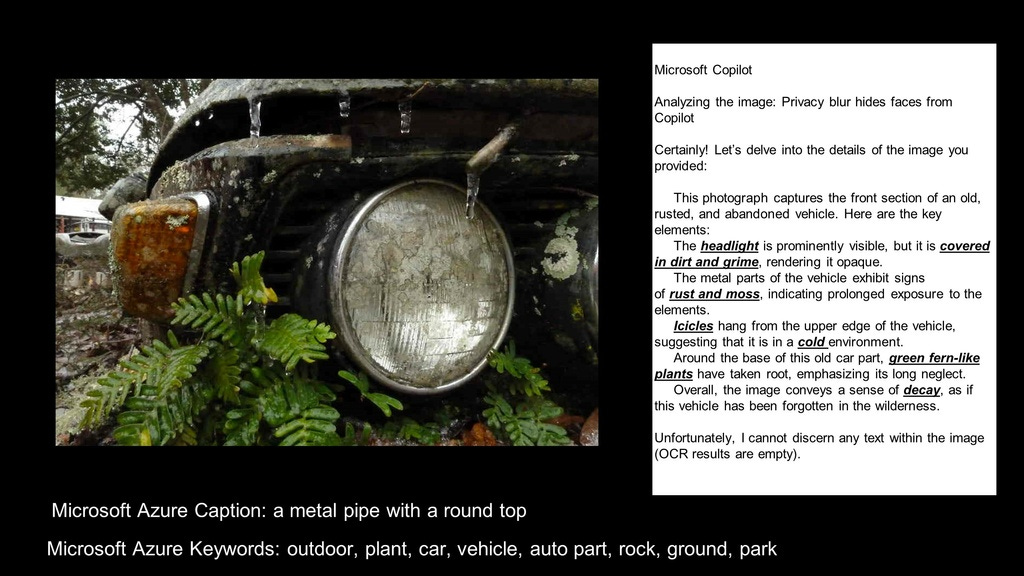

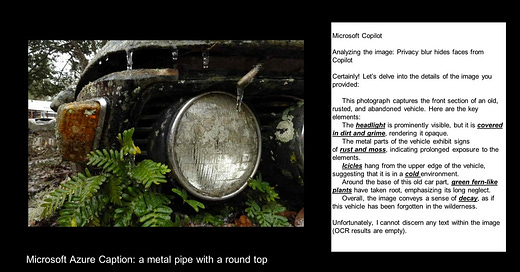

Last week, I was preparing a video for the Tools Track of the Knowledge Graph Conference and created this slide (which I ended up not using in the video). Nevertheless, I thought it might be a perfect launching point for a discussion about just how far image description technology has come in a year.

But then…I realized that Substack strips all embedded metadata from images you post on it and thus I’ve now become distracted by the topic of metadata stripping and so I can’t help but start with this subject instead. Consider this image an appetizer for an upcoming Part 2 in a series of newsletters about image description.

2. The First Problem: Metadata Stripping

This is a controversial topic. Let’s just get that on the table right now.

There is definitely the ‘good, the bad and the ugly’ when it comes to metadata and most people just get confused by the word ‘metadata’ anyway. Metadata is just ‘data’. It’s data about things, about stuff, about us. For another attempt at me describing metadata - see this page at my site: Metadata Rocks.

It gets far more complicated than this. There are many more ways to dissect and analyze metadata - from metadata added by cameras, phones and machines to metadata about human behavior, but when I talk about metadata, I’m most likely referring to: the creator, the copyright and the descriptions of digital images you can post on the web.

There are many types of ‘bad metadata’ (metadata that deliberately contains bias, inaccuracies and even hostile intent), but also many types of neutral metadata (like location in the form of GPS coordinates and camera data) that are, unfortunately, OFTEN maliciously used for stalking and doxxing, and it’s this misuse that often gives metadata an even worse reputation than it deserves.

Other types of metadata - such as the kind that is collected by social media and messaging services about our behaviors on the web - can also be used by any number of organizations for many deceptive and surveillance-related agendas, all the way from 3rd party and unwanted marketers to governments and political agendas. All of this is bad. It can be intentional or unintentional and/or used circumstantially for bad reasons.

However, it is as a result of these misuses of some types of metadata, that there are now many people who have been preconditioned to automatically say that ALL metadata from ALL images is bad and that it should ALL be stripped by default - especially in most social media platforms.

The awareness of the problems of ‘bad’ metadata, such as embedded location data, is so pervasive that it’s actually been a central plot point in several Hallmark movies. In the predictable format, the conflict between the soon-to-be couple comes down to the disclosure of a beautiful hidden locale inadvertently sent as an embedded GPS coordinate to a 3rd party who releases it to the world!

The social media platforms themselves win in multiple ways from that “all embedded metadata is bad” perspective (though I would argue they could have learned a lot more about image description all along if they had not done all the stripping for 20 years - but that is another point entirely).

In general, social media wins by stripping metadata because 1) you post more stuff faster (and at smaller footprint for them) and 2) (in my opinion) they have a perfect plausible deniability excuse for all kinds of things that can happen to your creative work once you have given it to them especially without embedded metadata. One example of this is the current on-going generative AI legal muddle between creators, copyright, ‘fair use’ and crawlers/scrapers.

More generally however, I believe that when social media platforms remove - from you - your own concern for your privacy and your own responsibility for your own critical thinking about what you are sharing and why, they have also conveniently removed their own liability and created a free-for-all crawl and grab environment for your creative work.

Does embedding copyright, or using other protective options, actually help against the blatant scraping and use of image content - especially content that has been handed over without ANY attached metadata?

We are still waiting on the courts to figure this one out, but I am happy I have always been on the side of embedding copyrights along with some other web based photo sites that have never given up on embedded metadata such as Flickr and my own site, ImageSnippets. I’m also very encouraged by the intent of

who is behind the new photo sharing app, Foto. I am an advisor to Foto which is in Beta and is actively preparing to include the ethical and responsible use of embedded metadata options in their app. They are actively researching the ways in which the metadata can be used only for the benefit of its users to get better services and search options.

At the same time that I say all of this, I also think that in many cases - the actual location data - the GPS coordinates should often be removed - even by default, but not without providing options perhaps for it to be left in the images for knowledgeable users.
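As a rough illustration of what "strip location by default, keep everything else" could look like, here is a minimal Python sketch. It works on a plain dictionary of already-extracted fields. The field names, the `prepare_for_upload` function and the `keep_location` option are all hypothetical placeholders of my own, not the API of any real platform or library.

```python
# Hypothetical field names, used only for illustration.
LOCATION_FIELDS = {"GPSLatitude", "GPSLongitude", "GPSAltitude", "LocationCreated"}


def prepare_for_upload(metadata: dict, keep_location: bool = False) -> dict:
    """Return a copy of the metadata, dropping location fields unless the user opts in."""
    if keep_location:
        return dict(metadata)
    return {key: value for key, value in metadata.items() if key not in LOCATION_FIELDS}


photo = {
    "Creator": "Jane Example",
    "CopyrightNotice": "(c) 2024 Jane Example",
    "AltText": "A slide comparing two image descriptions.",
    "GPSLatitude": 35.0,
    "GPSLongitude": -85.0,
}

# Default behavior: location is removed, creator, copyright and alt-text survive.
default_upload = prepare_for_upload(photo)

# A knowledgeable user can choose to leave the location in.
opt_in_upload = prepare_for_upload(photo, keep_location=True)
```

The point of the sketch is only that removing location data and removing ALL metadata are separate decisions, and a platform could offer the first without forcing the second.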

I do believe that many social media users often just can’t really process what it means to share or not share their location data. Even if they do understand the concerns, camera settings are hard to navigate, can get reset easily and you can never be completely sure in most platforms exactly what metadata is in your image. Further, I think most users would just as soon check a box to strip location data because they don’t understand it, while at the same time accompanying the image with a description in the post that reveals exactly where the photo was made, why and when they were there. There is a clear cognitive dissonance in those two actions.

But my personal feeling is that we have thrown the proverbial baby out with the bathwater by taking this broad brush stripping approach. I even go so far as to claim that we have set back image description technology (and opportunities to improve search techniques) by many years with de facto stripping of embedded metadata in images - regardless of how impressive the descriptive results from GPT4 Vision seem to be at this moment in time.

Instead of overly eagerly stripping data, we could have: 1) supported the growth of tools for users to learn to manage their own metadata better, and made those tools easy to access and easy to understand; 2) always given users better options to consider their image metadata and the fields they want to include, and allowed them to choose how they want it used; and 3) simply encouraged good metadata hygiene across many web ecosystems - especially in news organizations - where it really counts, instead of just warning people about the dangers of malicious behavior linked to metadata.

I know this last item #3, sounds about as pleasant as a trip to the dentist. But I believe, ignorance about metadata and/or bad metadata habits is partly to blame for many breakdowns in truth and authenticity across the web especially in the news industry.

Poor search results, misinformation, fake news, and the inaccessibility of web content to the people who need it the most are just a few of the daily problems created by ignorance about metadata (or even simple ‘data literacy’), not to mention the growing controversies over the philosophy of ‘truth’ and accuracy in language and communication.

Ultimately, as a result of how we have been conditioned to post images and videos, I know there is probably a very small subset of people on the planet who care about matching metadata to images when they post. These same people seem to only care later when they are trying to figure out what is going on with the Royal Family or maybe when they are curious about an archived image. But when most people post, they have been conditioned to default to the simplest possible mechanism for posting, and many people share images they just don’t care that much about anyway; they don’t care whether they are ripped for generative AI and they don’t care if they will be uninteresting a week from today. They only need to get attention for the 5 minutes those images will cross someone’s feed.

But I strongly believe that the quality of our lives on-line would be better if people were more thoughtful about what they shared and, more importantly, how they shared it, if they had better data literacy in general, and if platforms gave users more options to exercise their own critical thinking.

I also believe that image searches could be better if embedded metadata were a common practice and not a dark ‘technical’ kind of arcane art form. The web could then develop much stronger ties to more precise image description and context and therefore a stronger relationship to truth and accuracy.

And I certainly wish that companies (like Substack) were more sensitive about re-using the content already embedded in images and made that an option.

3. The Value of Metadata Workflows

Without delving into the deep philosophies about the many ways metadata can be misused, let me just say that not every image posted is being studied for personal information by bad actors. Many people, like myself, are simply sharing images thoughtfully with metadata. They understand the context, intention and implications behind what, why and where they are sharing their creations and they just want that information retained in the image and available for reuse later on down the pipeline.

This is just one example of how stripping ALL metadata is frustrating and inefficient.

As I write my first Substack article, I am already irritated that I will not be able to use images here in the way that I want: that is with my embedded description, context and intention included in my image and connected to my image in such a way that it can travel with the image and be reused.

It’s important to realize that it’s not enough to simply include those details in a description in the post that accompanies the image, because this just separates the metadata from the image content itself. Without a way of the metadata staying linked to it, it cannot easily travel with the image.

In this case, Substack allows me to add a caption and an alt-text field for accessibility, but both of these different kinds of metadata were already present in my image and written in detail. They could have obviously been imported and left in my image as well as my copyright intentions and contact information.

Many people know that the alt attribute in HTML (often called the alt tag) is used by screen readers to make the web more inclusive for those with vision or cognitive disabilities. Platforms also now regularly use the term alt-text for this type of metadata. But there is also an embedded alt-text field which could be transmitted with the image and automatically passed from place to place in the image. From Substack to Twitter to WordPress and to Google or to any other utilities or web services, an image can carry the alt-text information with it such that any platform could access this information consistently for reuse.
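To make the idea of an embedded alt-text field a little more concrete, here is a minimal Python sketch of how a platform might read it at import time. It assumes the alt-text is stored in the image's XMP packet under the IPTC property `Iptc4xmpCore:AltTextAccessibility`, and it finds the packet with a simple byte search rather than a real image parser. The function names and the sample bytes are my own illustration. A production tool would use a proper metadata library and would need to handle cases this sketch ignores, such as extended XMP split across segments.

```python
import re
import xml.etree.ElementTree as ET
from typing import Optional

NS = {
    "rdf": "http://www.w3.org/1999/02/22-rdf-syntax-ns#",
    "Iptc4xmpCore": "http://iptc.org/std/Iptc4xmpCore/1.0/xmlns/",
}
# ElementTree exposes the xml:lang attribute under this expanded name.
XML_LANG = "{http://www.w3.org/XML/1998/namespace}lang"


def find_xmp_packet(data: bytes) -> Optional[str]:
    """Return the first XMP packet found in the raw file bytes, or None."""
    match = re.search(rb"<x:xmpmeta.*?</x:xmpmeta>", data, re.DOTALL)
    return match.group(0).decode("utf-8", errors="replace") if match else None


def read_alt_text(xmp: str) -> dict:
    """Map each language tag to its embedded alt-text string."""
    root = ET.fromstring(xmp)
    items = root.findall(".//Iptc4xmpCore:AltTextAccessibility/rdf:Alt/rdf:li", NS)
    return {li.get(XML_LANG, "x-default"): (li.text or "").strip() for li in items}


# Stand-in for a real image file: some bytes with an XMP packet in the middle.
sample_file = (
    b"\xff\xd8 (image bytes) "
    b'<x:xmpmeta xmlns:x="adobe:ns:meta/">'
    b'<rdf:RDF xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#">'
    b'<rdf:Description rdf:about=""'
    b' xmlns:Iptc4xmpCore="http://iptc.org/std/Iptc4xmpCore/1.0/xmlns/">'
    b"<Iptc4xmpCore:AltTextAccessibility><rdf:Alt>"
    b'<rdf:li xml:lang="x-default">A slide comparing two image descriptions.</rdf:li>'
    b"</rdf:Alt></Iptc4xmpCore:AltTextAccessibility>"
    b"</rdf:Description></rdf:RDF></x:xmpmeta>"
    b" (more image bytes) \xff\xd9"
)

packet = find_xmp_packet(sample_file)
alt_text = read_alt_text(packet) if packet else {}
```

A platform that did something like this on upload could pre-fill its own alt-text box from `alt_text["x-default"]` and let the user edit it, instead of starting from an empty field.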

Some people argue that the context of caption/description and alt-text changes across platforms. While there is some bit of truth to this, I would say, it’s not as much as you think. There are ways to author captions and alt-text that would pretty much cover most social media contexts and once imported, it could easily be edited for unique contexts if necessary at all.

In general, an attention to workflow with regards to importing and exporting metadata embedded in images could be immensely useful.

Take the following link:

This is the same image above but shared from ImageSnippets. Unfortunately, Substack won’t even resolve a thumbnail from this html link, but if you click on the link, you can flip the image (as if you are looking at the back of a photo) and read a much better caption as well as read (or hear) the alt-text. There is also quite a bit more information, such as links to me as creator, a credit line, date created and other metadata that might be helpful or of interest.

Of course this image is not a masterful work of art - it’s only an illustration, but the point is, I added embedded metadata which was automatically stripped and I was forced to duplicate the alt-text and craft a new caption.

The most annoying point, however, is that all of the information embedded in this image, which can be seen in the link above, is now permanently gone from/disconnected from the image.

If I were to share the same image on LinkedIn or Twitter or Bluesky, I would also have to keep duplicating the alt-text over and over and over again. Some services will at least show your caption under an image thumbnail with your link if your metadata is in Open Graph tags (which ImageSnippets does add to the html file coincidentally).

To take the point even further however, descriptive information that is contained within the image itself can often provide a lot more detail and context about an image - just like flipping an actual photograph over to read the descriptions on the back of the photo. It can include valuable and precise identification of objects and details (historic, instructive and utilitarian) which could just generally make search so much better in so many different platforms. Imagine genealogists of the future being able to always peek inside an image to learn more about the people, places or situations in the image.

As is, sites like Ancestry do a good job of importing metadata, but they also don’t leave the information in the image! Meaning you cannot export that data with the image later (or any of the descriptive information you have added to the image in the platform). Sites like iNaturalist also do a good job of handling some kinds of metadata at import - they even have an excellent feature for dealing with location data, but they also strip it from the photo once it’s in their ecosystem, so that you have no way of exporting the image with metadata intact.

4. Mitigating Metadata Stripping

For many years the International Press Telecommunications Council (IPTC) (of which I am a member of the photo-metadata working group) has been setting standards for how embedded metadata should be transmitted with images. There is a long history behind the evolution of The IPTC Photo-Metadata Standard, which has had the input of many big news organizations including the NYT, WaPo, and the wire services: AP, Reuters, AFP. Their work has also evolved preceding and alongside innovations from Adobe. Some big Generative AI companies have claimed in recent years that they had never heard of such a thing as embedded metadata, but the fact is, it has existed as long as images have been transmitted across digital or wire services.

The IPTC has also spent a lot of time creating tools for users to do things like evaluate embedded metadata in workflows. There are the IPTC Interoperability Tests as well as an invaluable tool: GetPMD - which allows users to identify embedded metadata in images. GetPMD can be called from a browser window but also called from a browser extension.

The working group also runs tests on Social Media platforms to show which platforms retain metadata: Social Media Tests. This last item, sadly, has become less and less relevant over time, because the culture of the web overwhelmingly strips data by default. It is still useful to use it to learn of trusted social sites where metadata is not stripped (also, the working group is always interested to hear of more of these places to test for the list).

Again, it’s not that I don’t think there are some very good reasons why location data, and even in some high-risk situations all metadata, should be stripped; it’s just that I think that throwing out ALL mechanisms for preserving useful metadata has been a mistake for many reasons.

ImageSnippets, the system mentioned earlier, allows users to manage their own metadata, write alt-text descriptions with the images for accessibility and write thorough descriptions for images, among a number of other functions. It can also allow you to embed many other fields such as creator and copyright and assertions about how you intend for your image to be used (such as including the new IPTC data mining restrictions field). ImageSnippets is designed to not only allow you to share and publish from the system, but also to attach your images to a knowledge graph.

There are of course plenty of other organizations, especially many in the GLAM (Galleries, Libraries, Archives and Museums) sector, who have historically supported and continue to support strong embedded metadata initiatives, but these organizations often face the same exact problems when trying to share images in social media platforms. There is simply a lack of options for preventing images from being orphaned from their metadata.

I’ve recently joined the Research Data Alliance and a newly formed charter called: Collections as Data, so I’m excited by the prospect of collaborating more in the cultural humanities sphere to try to help steer standards about image description metadata, and the ethical governance of data based on FAIR standards (Findable, Accessible, Interoperable and Reusable).

Finally, one last word about the Content Authenticity Initiative. The CAI (originating with Adobe) has been getting a huge amount of press these days as it has sought to gather momentum around a Coalition for Content Provenance and Authenticity (C2PA) which was formed to build tooling for publishing images that can carry watermarks, claims and assertions for authenticity. The C2PA methods are definitely a work in progress. They have great goals, but I also have some reservations about how it can all work until some of the finer details are ironed out. Look to this blog for some alternative ideas: Hacker Factor - VIDA - The Simple Life

One thing to observe about the objectives of the Content Authenticity Initiative especially in relation to this article, is that their mission is to attempt to connect a trust layer to content provenance, but it is not necessarily to help metadata be highly reusable or to follow FAIR principles. While it has good intentions, it’s not quite the same as just promoting the simple reuse of metadata.

5. A Possible Shift?

In recent months, Google - who previously stripped metadata in products like Google Plus - has perhaps had a change of heart. Articles like this: https://support.google.com/merchants/answer/14572008 indicate that Google now believes that images should not be stripped - particularly if they are embedded with a Digital Source Type field indicating that they are created with Generative AI.

As I’ve written about previously on LinkedIn, IMO, there is a somewhat self-serving reason for this flip-flop, which is a dawning realization over the past year that Generative AI does not perform well on 2nd, 3rd (or more) generation images, so it is suddenly ‘useful’ for Google’s AI to know if the images they scrape have already been generated by AI. The best way to do this is by embedding some metadata that says that it has been created by a machine. In fact Google AI Labs, Image FX is the first Generative AI system I’ve tried that seems to consistently use the IPTC Digital Source Type field, correctly identifying the source type with the newscode for the field: ‘TrainedAlgorithmicMedia’. They also provide metadata for the credit line: “Made with Google AI”.

To see this at work, click on this link: An image created by ImageFX. Note: the prompt I used was: ‘an intake manifold for a Porsche 356’ and what it generated is NOT that. That is just another indication of what a long way image description has to go - and actually how Google and others could have a much better idea about what a Porsche 356 intake manifold actually looks like if they had just studied and used more embedded (and structured) metadata all along :-)

To observe these fields: you can flip the image in the link and look at the ‘triples’ and/or you can send the image to the GetPMD site (using the browser extension is easiest) and take a look at the data. The description was written by me. Credit line and Digital Source Type originated with the creation of the image. In the ImageSnippets system, we are preventing the Digital Source Type field from being edited if it was imported as TrainedAlgorithmicMedia.
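For readers who would rather check the field in code, here is a minimal Python sketch. It assumes you already have the image's XMP packet as a string and that the field is stored as `Iptc4xmpExt:DigitalSourceType` holding the IPTC NewsCodes URI for trained algorithmic media. The function names and the `may_edit_source_type` rule are hypothetical illustrations of the behavior described above, not the actual ImageSnippets or GetPMD code. The comparison ignores letter case, since the value is written with different capitalization in different places.

```python
import xml.etree.ElementTree as ET
from typing import Optional

RDF = "http://www.w3.org/1999/02/22-rdf-syntax-ns#"
IPTC_EXT = "http://iptc.org/std/Iptc4xmpExt/2008-02-29/"
DST = f"{{{IPTC_EXT}}}DigitalSourceType"
AI_GENERATED = "http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia"


def digital_source_type(xmp: str) -> Optional[str]:
    """Return the Digital Source Type value from an XMP packet, or None if absent."""
    root = ET.fromstring(xmp)
    for desc in root.iter(f"{{{RDF}}}Description"):
        # XMP allows simple values as attributes or as child elements.
        if DST in desc.attrib:
            return desc.attrib[DST]
        child = desc.find(DST)
        if child is not None and child.text:
            return child.text.strip()
    return None


def is_ai_generated(xmp: str) -> bool:
    """True when the packet declares the image as trained algorithmic media."""
    value = digital_source_type(xmp)
    return value is not None and value.lower() == AI_GENERATED.lower()


def may_edit_source_type(xmp: str) -> bool:
    """Hypothetical rule: lock the field once it was imported as AI-generated."""
    return not is_ai_generated(xmp)


sample_xmp = (
    '<x:xmpmeta xmlns:x="adobe:ns:meta/">'
    f'<rdf:RDF xmlns:rdf="{RDF}">'
    f'<rdf:Description rdf:about="" xmlns:Iptc4xmpExt="{IPTC_EXT}">'
    f"<Iptc4xmpExt:DigitalSourceType>{AI_GENERATED}</Iptc4xmpExt:DigitalSourceType>"
    "</rdf:Description></rdf:RDF></x:xmpmeta>"
)
plain_xmp = (
    '<x:xmpmeta xmlns:x="adobe:ns:meta/">'
    f'<rdf:RDF xmlns:rdf="{RDF}"><rdf:Description rdf:about=""/></rdf:RDF>'
    "</x:xmpmeta>"
)

sample_is_ai = is_ai_generated(sample_xmp)
plain_is_ai = is_ai_generated(plain_xmp)
```

Of course, a check like this only works if the field survives the trip, which is exactly why stripping everything on upload defeats the purpose.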

Apart from this, Google also started giving guidance around Creator, Copyright and Licensing information embedded with IPTC information as well (as early as 2018).

And just last month, it was announced that Google has officially joined the IPTC as a voting member! This will hopefully have a very beneficial effect for the future of image metadata as they will be able to contribute to the global standards: https://iptc.org/news/google-joins-iptc-the-global-standards-body-of-the-news-media/. I’m very optimistic!

In my next post, I’ll be looking more closely at image description itself from the perspective of how to preserve the knowledge inherent in a description more accurately and effectively.

A long and thought-provoking read. Here are a few thoughts I had while reading:

I love the paradigm of virtually "flipping" a photograph to read its extensive metadata on ImageSnippets because it reminds me so much of our old family photo albums. Those low-resolution black-and-white, two-photos-a-year-per-family-member rarities had handwritten metadata on the back. Sometimes, it'd be date and time, sometimes geolocation, sometimes PII, and sometimes highly personal blobs.

---

As far as malicious pics "metanalysis" at scale is concerned, one of the mechanisms of protection I was thinking about is the equivalent of spam protection, which is also the basis for blockchain. In this case, a bit of computational power is required to get access to metadata. This computational expense will be unnoticeable for normal use but will be prohibitive at scale.

---

Coming from an engineering background and dealing with data (and metadata) daily, I can't help but notice a similarity with data protection in the corporate world. Almost every piece of data can be access-controlled through IAM (Identity and Access Management). The data is always there and is never lost, but accessing it is not a free-for-all.

Therefore, dissociating metadata from the data and handling access to both separately could be a way to eat the cake and have it, too.

I can't stalk you as an individual Instagram user, but ImageSnippets can be granted API access to metadata if your intentions are good and you've been vetted by ISO and co.

Am I too naïve?